Privacy by Design means building data protection into software architecture from the start, not bolting it on later. At QUALLEE, this includes: granular consent management with audit trails, automatic data deletion based on defined retention periods, self-service for data subject rights, and full transparency in AI processing. This article shows the concrete technical implementation.

Why Data Protection Is Architecture

Most software products treat data protection like a checklist to tick off before launch. Cookie banner here, privacy policy there, maybe a consent popup. Done.

Until someone asks where exactly the consent is stored. Until a user wants to export their data. Until the regulator wants to know how long which data is retained and why.

Retrofitting data protection is more expensive than building it in. That was the thinking when we built QUALLEE. Privacy by Design isn't philosophy; it's an architectural decision you either make at the start or pay to fix later.

The Seven Principles by Ann Cavoukian

Dr. Ann Cavoukian formulated Privacy by Design in the 1990s as seven principles, which international data protection authorities recognized as a standard in 2010. The GDPR took up the core idea in Article 25 as "data protection by design and by default".

The principles sound abstract: "Proactive not reactive", "Privacy as the default", "End-to-end security". In practice, they mean concrete decisions.

Proactive not reactive means: Before we build a feature, we ask what data it needs and for how long. We don't wait until someone asks about retention periods.

Privacy as default means: If a user does nothing, their data is still protected. No opt-out traps, no pre-checked marketing boxes.

Privacy embedded into design means: Data protection isn't a component you toggle on or off. It's in the database schema, in the API endpoints, in the UI components.

AI Products and the Trust Problem

AI products have an image problem with data protection. Headlines about training data, about models reproducing personal information, about opaque data flows have left their mark.

The problem is real, but not inevitable. AI-powered products can be privacy-compliant; they just require more care in architecture.

At QUALLEE, interview data flows through AI models from Anthropic and OpenAI. That's the core of the product. At the same time, we've drawn clear boundaries.

Raw data stays on our servers in Germany. Only the text content necessary for analysis goes to the AI APIs, transmitted encrypted. Both providers have signed Data Processing Agreements that exclude use for model training. We've documented and filed the DPAs from OpenAI, Anthropic, Hetzner, Stripe, and Resend.
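
A minimal sketch of that boundary, assuming a hypothetical `TranscriptSegment` record. The field names and the `toAiPayload` helper are illustrative and not QUALLEE's actual schema. The idea is to allow-list the one field that may leave the server rather than strip the sensitive ones.

```typescript
// Hypothetical shape of a stored transcript segment (field names are assumptions).
interface TranscriptSegment {
  sessionId: string;
  participantEmail?: string;
  ipAddress?: string;
  audioFileUrl?: string;
  text: string;
}

// The only shape that is allowed to leave the server.
interface AiPayload {
  texts: string[];
}

// Allow-list instead of deny-list: a column added to TranscriptSegment later
// cannot end up in the payload by accident.
function toAiPayload(segments: TranscriptSegment[]): AiPayload {
  return {
    texts: segments
      .map((segment) => segment.text.trim())
      .filter((text) => text.length > 0),
  };
}
```

The text itself can still contain personal information, which is why uploads of third-party material go through the consent step described in the next section.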

The AI analyzes what people say; it doesn't analyze how they look. No facial recognition, no emotion detection from video or audio. Some of these practices have been prohibited under the EU AI Act since February 2025, for example emotion recognition in workplaces and educational institutions, but we built it that way from the start.

Consent Management Under GDPR Article 6

The GDPR distinguishes different legal bases for data processing. Article 6 lists them. Two are particularly relevant for us: contract performance and consent.

When a paying customer uses the analysis chat, we process their data based on contract performance. They've subscribed, the analysis is part of the contract. A notice that AI is being used is sufficient. No modal, no checkbox, no storage.

It's different when third-party data comes into play. When a researcher uploads transcripts containing interview participant statements. Or when someone imports core questions that may contain personal information. Here we need explicit consent, and we must store it.

The distinction sounds academic but has practical consequences. An info notice is a sentence of text in the UI. A stored consent is a database entry with timestamp, IP address, user agent, and version number of the consent text.

| Location | What's Required | Rationale |
|---|---|---|
| Analysis chat | Info notice | User is contract party |
| Support chat | Info notice | Contract performance |
| Core questions import | Consent + storage | Third-party data possible |
| Transcript upload | Consent + storage | Third-party data (interviewees) |
| Interview participation | Consent + storage | Participant is not contract party |
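
The table can be encoded as a lookup so the decision lives in one place. This is a sketch under assumptions: only `import_questions` appears as an identifier in this article, and the other location names and `needsStoredConsent` are made up for illustration.

```typescript
// Places where AI processing happens. Apart from "import_questions",
// these identifiers are illustrative assumptions.
type ProcessingLocation =
  | "analysis_chat"
  | "support_chat"
  | "import_questions"
  | "transcript_upload"
  | "interview_participation";

type Requirement = "info_notice" | "stored_consent";

// Record<...> forces an entry for every location, so adding a new location
// without deciding its legal basis fails at compile time.
const REQUIREMENTS: Record<ProcessingLocation, Requirement> = {
  analysis_chat: "info_notice",
  support_chat: "info_notice",
  import_questions: "stored_consent",
  transcript_upload: "stored_consent",
  interview_participation: "stored_consent",
};

function needsStoredConsent(location: ProcessingLocation): boolean {
  return REQUIREMENTS[location] === "stored_consent";
}
```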

Technical Implementation of Consent Management

Consent management needs infrastructure. For us, that's two database tables, several API endpoints, and a React hook.

The UserAiConsent table stores consents from registered users. Each entry contains the consent type, the time of granting, optionally the time of revocation, IP address, user agent, and the version of the consent text. The combination of user ID and consent type is unique, so a user has at most one consent record per type.
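
As a rough illustration, the row and its uniqueness rule could look like this. The field names are derived from the description above, and `ConsentStore` is a hypothetical in-memory stand-in for the real table, not the actual schema. Whether a repeated grant overwrites the row, as it does here, is also an assumption.

```typescript
// Assumed row shape, derived from the fields listed above.
interface UserAiConsent {
  userId: string;
  consentType: string;
  grantedAt: Date;
  revokedAt: Date | null;
  ipAddress: string;
  userAgent: string;
  textVersion: string;
}

type GrantInput = Omit<UserAiConsent, "grantedAt" | "revokedAt">;

// In-memory stand-in for the table. The object key plays the role of the
// unique constraint on (userId, consentType).
class ConsentStore {
  private rows: { [key: string]: UserAiConsent } = {};

  private static key(userId: string, consentType: string): string {
    // JSON encoding avoids collisions such as ("a:b", "c") vs ("a", "b:c").
    return JSON.stringify([userId, consentType]);
  }

  // Upsert: granting the same type again replaces the row instead of adding one.
  grant(input: GrantInput, now: Date = new Date()): UserAiConsent {
    const row: UserAiConsent = { ...input, grantedAt: now, revokedAt: null };
    this.rows[ConsentStore.key(input.userId, input.consentType)] = row;
    return row;
  }

  find(userId: string, consentType: string): UserAiConsent | undefined {
    return this.rows[ConsentStore.key(userId, consentType)];
  }

  count(): number {
    return Object.keys(this.rows).length;
  }
}
```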

The IntervieweeConsent table is for interview participants. They're not registered users, so they don't have a user ID. Instead, we link the consent to the session ID. Additionally, we store a SHA-256 hash of the displayed consent text. This lets us prove later exactly what text the participant saw.
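
Hashing the displayed text could be sketched as follows, using Node's built-in `crypto` module. The record shape and the helper names (`hashConsentText`, `recordIntervieweeConsent`, `matchesRecordedText`) are assumptions for illustration.

```typescript
import { createHash } from "node:crypto";

// Assumed record shape: no user ID, only the interview session.
interface IntervieweeConsent {
  sessionId: string;
  grantedAt: Date;
  consentTextHash: string; // hex-encoded SHA-256 of the text shown
}

// Hash exactly the string that was rendered, byte for byte.
function hashConsentText(displayedText: string): string {
  return createHash("sha256").update(displayedText, "utf8").digest("hex");
}

function recordIntervieweeConsent(
  sessionId: string,
  displayedText: string,
  now: Date = new Date(),
): IntervieweeConsent {
  return { sessionId, grantedAt: now, consentTextHash: hashConsentText(displayedText) };
}

// Later proof: re-hash an archived text version and compare it to the record.
function matchesRecordedText(record: IntervieweeConsent, candidateText: string): boolean {
  return record.consentTextHash === hashConsentText(candidateText);
}
```

A hash only proves something if the original consent texts are archived as well, so that a candidate version can be re-hashed and compared.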

The useAiConsent React hook makes consent management usable in the frontend. It checks on component load whether consent already exists. If not, it shows a modal. After confirmation, it saves the consent via the API and remembers the status for the session.

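```typescript
// Inside a component; useAiConsent is the hook described above.
const { hasConsent, grantConsent, showConsentModal } = useAiConsent("import_questions")

// handleImport is an illustrative name for the action that needs consent.
function handleImport() {
  // Before an action requiring consent:
  if (!hasConsent) {
    // Assumes showConsentModal is a function that opens the modal;
    // confirming the modal then calls grantConsent.
    showConsentModal()
    return
  }
  // Consent exists, proceed with action
}
```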

For revocation, we don't delete the consent entry but set a revokedAt date. The GDPR allows consent records to be retained even after revocation; under Article 7(1), we must be able to demonstrate that consent was given.
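
Revocation as a timestamp rather than a delete can be sketched like this. `ConsentRecord`, `revokeConsent`, and `isConsentActive` are hypothetical names, and keeping the first revocation time on repeated calls is a design assumption.

```typescript
// Reduced record shape; only the fields relevant for revocation.
interface ConsentRecord {
  consentType: string;
  grantedAt: Date;
  revokedAt: Date | null;
}

// Returns a new record with revokedAt set. Calling it again is a no-op,
// so the first revocation time is preserved as evidence.
function revokeConsent(record: ConsentRecord, now: Date = new Date()): ConsentRecord {
  if (record.revokedAt !== null) {
    return record;
  }
  return { ...record, revokedAt: now };
}

// A consent counts as active only while it has no revocation timestamp.
function isConsentActive(record: ConsentRecord): boolean {
  return record.revokedAt === null;
}
```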

AI Transparency Under the EU AI Act

The EU AI Act requires that users be informed when they interact with an AI system; the transparency obligations in Article 50 apply from August 2026. We built it that way from the start.

The ConsentDialog for interview participants makes unmistakably clear that an AI system is conducting the interview. The text is available in five languages: German, English, French, Spanish, Italian. These are localized texts, not machine translations.

The dialog contains three central pieces of information: Data is stored encrypted on a German server. Processing is done by AI models. Data is not used for AI training.

In addition to the consent checkbox, there's a captcha verification. This isn't a GDPR requirement but protection against automated access.

In the dashboard, AI notices are less prominent but present. A small text above the chat: "AI-powered analysis". No modal, no popup; the user knows without being interrupted.

Automatic Data Deletion by Retention Period

Setting retention periods is easy. Following them is harder.

The GDPR requires in Article 5(1)(e) that personal data be kept no longer than necessary for its purpose. That sounds obvious but regularly fails in practice: The study is finished, the data is still somewhere, nobody deals with it.

We've set up a cronjob that runs daily at 02:30. It checks three categories: Support tickets older than 24 months are deleted. Archived interview sessions older than 12 months are deleted. Consent records from deleted users are removed after 36 months.

The job runs in dry-run mode when started manually. It shows what would be deleted without actually deleting. Only with the --execute flag is data actually removed.

| Data Type | Retention Period | Automatic Deletion |
|---|---|---|
| Support tickets | 24 months | Yes |
| Archived sessions | 12 months | Yes |
| Consent records | 36 months after deletion | Yes |
| Project data | Project duration + 12 months | On project deletion |
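
The three automated categories and the dry-run default can be sketched as below. Everything except the periods and the `--execute` flag is an assumption: the category names, the `referenceDate` field (creation, archiving, or user deletion date, depending on the category), and the in-memory filtering that stands in for database deletes. A daily 02:30 schedule corresponds to the cron expression `30 2 * * *`.

```typescript
type RetentionCategory =
  | "support_ticket"
  | "archived_session"
  | "consent_record_of_deleted_user";

// Retention periods in months, as listed in the table above.
const RETENTION_MONTHS: Record<RetentionCategory, number> = {
  support_ticket: 24,
  archived_session: 12,
  consent_record_of_deleted_user: 36,
};

interface RetainedRecord {
  id: string;
  category: RetentionCategory;
  referenceDate: Date; // the date the retention period starts counting from
}

// Everything with a reference date before this cutoff is expired.
// Month arithmetic overflows on day 29-31; acceptable for a sketch.
function cutoffFor(category: RetentionCategory, now: Date): Date {
  const cutoff = new Date(now.getTime());
  cutoff.setUTCMonth(cutoff.getUTCMonth() - RETENTION_MONTHS[category]);
  return cutoff;
}

interface RetentionResult {
  mode: "dry-run" | "execute";
  expiredIds: string[];
  remaining: RetainedRecord[];
}

// Without --execute the job only reports what it would delete.
function runRetentionJob(
  records: RetainedRecord[],
  args: string[],
  now: Date = new Date(),
): RetentionResult {
  const execute = args.indexOf("--execute") !== -1;
  const isExpired = (r: RetainedRecord): boolean =>
    r.referenceDate.getTime() < cutoffFor(r.category, now).getTime();
  return {
    mode: execute ? "execute" : "dry-run",
    expiredIds: records.filter(isExpired).map((r) => r.id),
    remaining: execute ? records.filter((r) => !isExpired(r)) : records,
  };
}
```

Making deletion the opt-in path means a mistyped manual run cannot remove data.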

Data Subject Rights as Self-Service

The GDPR gives people rights over their data: Access (Art. 15), Rectification (Art. 16), Erasure (Art. 17), Data portability (Art. 20). In many products, these are manual processes: The user writes an email, someone manually exports the data, sends it as a ZIP file.

For us, these are features in account settings.

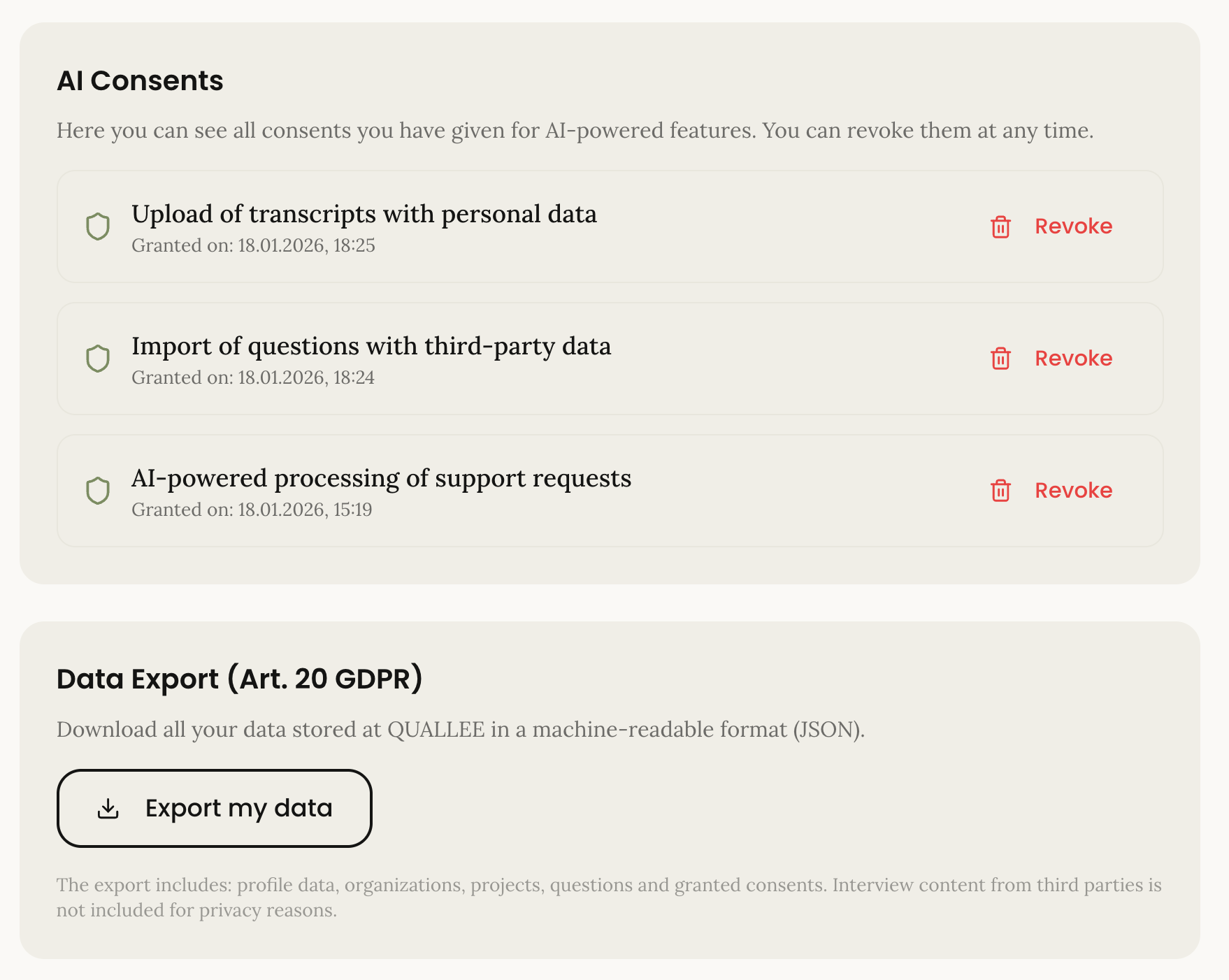

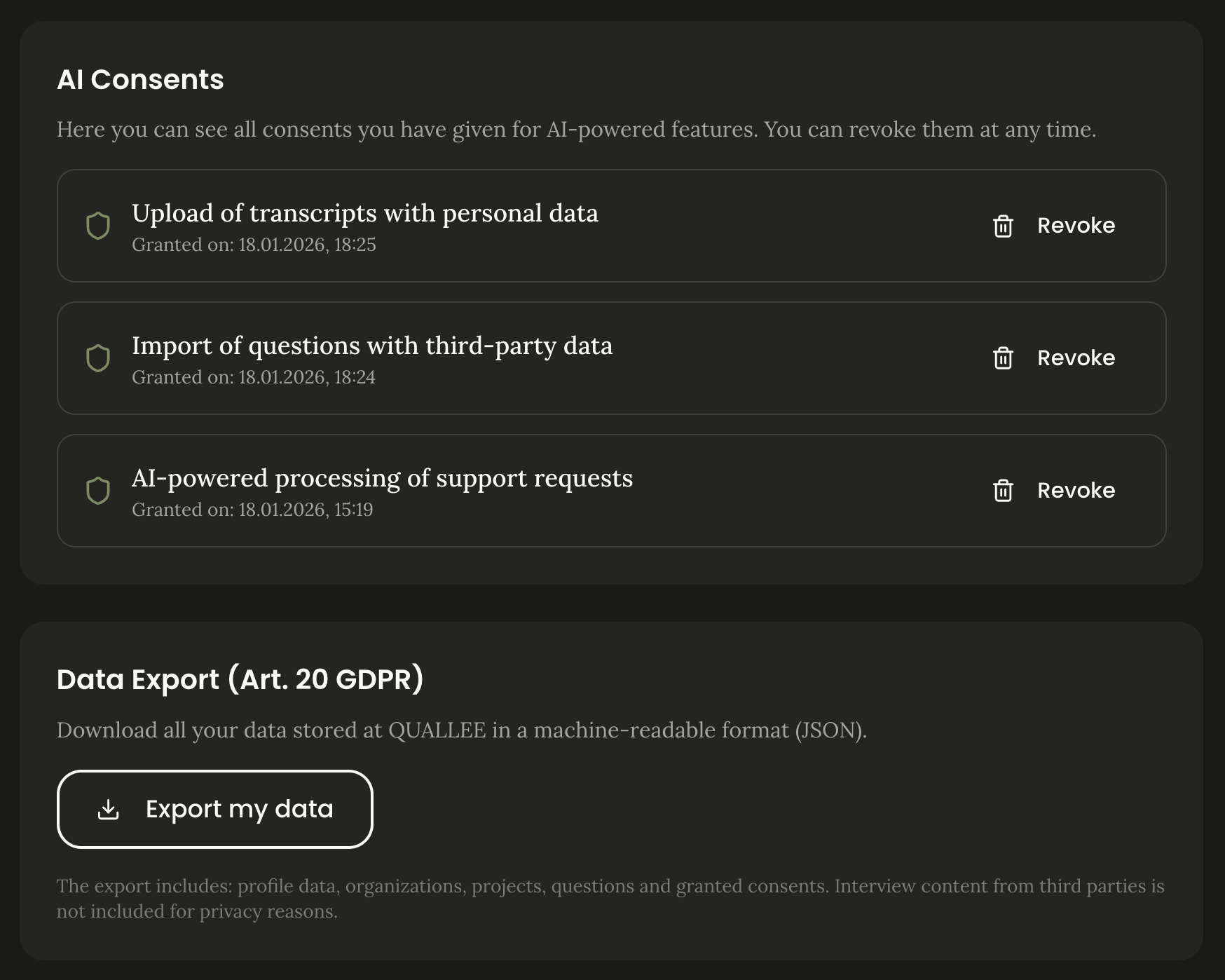

The privacy page shows all granted consents. Users can revoke them individually. Revocation is as easy as granting; that's what Article 7(3) of the GDPR requires.

The data export generates a JSON file with all personal data: Account information, projects, granted consents. Machine-readable, as Article 20 requires.
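
A sketch of such an export, with an assumed structure: the three top-level sections come from the description above, while the individual field names and `buildAccountExport` are illustrative.

```typescript
// Assumed export structure; dates are ISO 8601 strings so the file stays
// readable for other tools.
interface AccountExport {
  exportedAt: string;
  account: { email: string; name: string };
  projects: { id: string; title: string }[];
  consents: { consentType: string; grantedAt: string; revokedAt: string | null }[];
}

function buildAccountExport(
  account: AccountExport["account"],
  projects: AccountExport["projects"],
  consents: AccountExport["consents"],
  now: Date = new Date(),
): string {
  const payload: AccountExport = {
    exportedAt: now.toISOString(),
    account,
    projects,
    consents,
  };
  // Pretty-printed JSON: machine-readable, and still legible for the user.
  return JSON.stringify(payload, null, 2);
}
```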

Account deletion is a soft delete with anonymization. We remove personal data but keep anonymized project data if it is relevant for other team members. Consent records are retained; Article 17(3) provides exceptions to erasure that cover this, such as the defence of legal claims.
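
One way to read "soft delete with anonymization" in code, under stated assumptions: the `User` and `Project` shapes are hypothetical, and dropping projects that have no other members is a guess at what "relevant for other team members" implies.

```typescript
interface User {
  id: string;
  email: string | null;
  displayName: string | null;
  deletedAt: Date | null;
}

interface Project {
  id: string;
  ownerId: string;
  memberIds: string[];
}

// Soft delete: the row and its ID survive so references stay valid,
// but every personal field is cleared.
function softDeleteUser(user: User, now: Date = new Date()): User {
  return { id: user.id, email: null, displayName: null, deletedAt: now };
}

// Keep a project if it belongs to someone else, or if other members still
// use it. The deleted user's ID then points at an anonymized row.
function projectsToKeep(userId: string, projects: Project[]): Project[] {
  return projects.filter(
    (project) =>
      project.ownerId !== userId ||
      project.memberIds.some((memberId) => memberId !== userId),
  );
}
```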

What We Learned from This

Privacy by Design costs time. Building the consent infrastructure took longer than a simple cookie banner. Automatic deletion requires more planning than "we'll delete when someone complains".

This investment pays off, not primarily through avoided fines (though GDPR penalties exceeded 2 billion euros in 2025), but through trust. Researchers work with sensitive data. They want to know their participants are protected. A traceable data protection concept is a selling point.

The EU AI Act raises the requirements; it doesn't change their direction. Those who build their AI products transparently and data-sparingly from the start won't need to scramble in August 2026.

Frequently Asked Questions

What is Privacy by Design?

Privacy by Design is a concept by Dr. Ann Cavoukian that calls for building data protection into system development from the start, rather than adding it later. The GDPR enshrined it in Article 25 as "data protection by design and by default".

How does an info notice differ from explicit consent?

An info notice is sufficient for contract performance (Art. 6(1)(b) GDPR) when the user themselves is the contract party. Explicit consent with storage is required when third-party data is processed or the data subject is not a contract party.

How long must consent records be retained?

The GDPR doesn't specify a concrete period, but companies must be able to prove consent was given. Three years after revocation or contract end is a common recommendation, matching the standard limitation period under German civil law.

Is my data used for AI training?

No. Both OpenAI and Anthropic have signed Data Processing Agreements that exclude use of API data for model training. Raw data stays on German servers.

Try It Yourself

QUALLEE conducts AI-powered interviews that are privacy-compliant from the start. German servers, documented DPAs, automatic retention periods, transparent consent processes. An interview takes about 20 to 30 minutes.