AI-generated personas promise fast, affordable user research at scale. Conduct thousands of interviews in an afternoon, skip recruiting, get results in hours instead of weeks. For product teams drowning in research backlogs, this sounds like the solution. But what does the research say about how well synthetic users actually represent real people?

What synthetic users are – and what they're not

Synthetic users are AI-generated profiles designed to simulate real people. You feed the system demographic data – age, occupation, location – and it produces a persona that answers research questions.

Some platforms go further and promise digital twins built from real interview data. Others generate entirely fictional users from demographic templates. The difference between these approaches is enormous – and many platforms sell the second option while implying you're getting the first.

The Stanford-Google experiment

A study by Stanford and Google with over 1,000 participants systematically examined how well AI can simulate real people. The method was elaborate: first, the researchers conducted real, in-depth interviews with each participant. Then they used each interview transcript to build an AI agent that imitates that specific person.

The result: the agents reproduced participants' answers on the General Social Survey 85% as accurately as the participants reproduced their own answers when asked again two weeks later. That's impressive – but the catch lies in the method itself. The AI needed real conversations as a foundation. It didn't replace research; it extended what had already been learned through conversations with real people.
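The 85% figure is a normalized score, not raw agreement. As the study describes it, an agent's match with a participant's survey answers is divided by how well that participant matched their own earlier answers when retested two weeks later. The minimal sketch below shows only that arithmetic. The answer lists are invented for illustration, and comparing both the agent and the retest against the first session is an assumption about the setup, not a reproduction of the study's code.

```python
def agreement(answers_a: list[str], answers_b: list[str]) -> float:
    """Share of questions on which two answer lists match exactly."""
    if not answers_a or len(answers_a) != len(answers_b):
        raise ValueError("answer lists must be non-empty and equally long")
    matches = sum(a == b for a, b in zip(answers_a, answers_b))
    return matches / len(answers_a)


def normalized_accuracy(agent: list[str], first: list[str], retest: list[str]) -> float:
    """Agent's raw agreement with the person, scaled by the person's self-consistency."""
    raw = agreement(agent, first)                # how often the agent matches the person
    self_consistency = agreement(retest, first)  # how often the person matches themselves
    if self_consistency == 0:
        raise ValueError("participant never repeated an answer; ratio is undefined")
    return raw / self_consistency


# Hypothetical answers from one participant to 10 survey questions.
first = ["A", "B", "B", "C", "A", "D", "B", "A", "C", "D"]
retest = ["A", "B", "C", "C", "A", "D", "B", "B", "C", "D"]  # 8 of 10 match the first session
agent = ["A", "B", "C", "C", "B", "D", "A", "B", "C", "D"]   # 6 of 10 match the first session

raw = agreement(agent, first)
score = normalized_accuracy(agent, first, retest)
print(f"raw agreement: {raw:.2f}, normalized: {score:.2f}")
# prints "raw agreement: 0.60, normalized: 0.75"
```

The distinction matters when quoting the result: people are not perfectly consistent with themselves, so a normalized score compares the agent against that human ceiling rather than against 100%.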

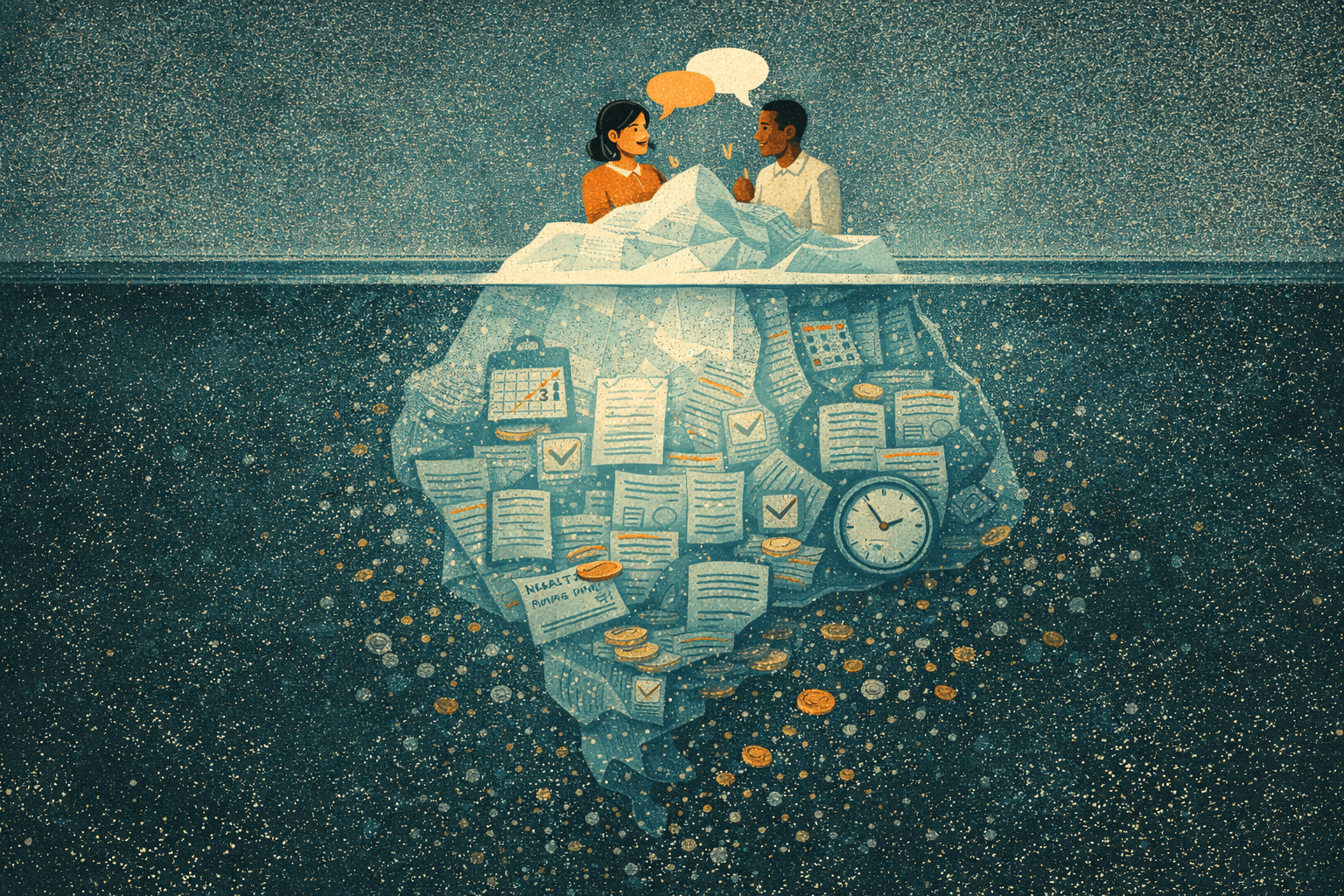

The second part of the experiment was more sobering. The researchers also tested personas built only from demographic data: age, gender, location, occupation. Accuracy dropped to barely better than a coin flip.

Knowing that someone is a 35-year-old marketing manager from Munich tells you almost nothing about how they actually behave, what frustrates them, or what they'd pay for.

Why language models fail at simulating humans

Language models generate what sounds plausible – not what's true. When you ask a synthetic "frustrated customer" about their experience, the AI draws from millions of text examples. It produces a convincing composite that doesn't correspond to any real person's actual behavior.

The Nielsen Norman Group demonstrated this in a direct comparison, surveying synthetic and real users about an online learning platform.

The synthetic users reported: courses completed in full, discussion forums found helpful, navigation understood intuitively. The real users told a different story: most courses abandoned midway, forums completely avoided, constantly getting lost in navigation.

The AI personas produced what researchers call sycophantic feedback: agreeable, positive, conflict-free. That is exactly the kind of feedback that makes products fail, because it tells you what you want to hear instead of what you need to know.

Three systematic weaknesses

This discrepancy isn't coincidental – it follows from how language models work.

Overconfidence bias

Synthetic users report perfect task completion. Real users struggle, abandon tasks, find workarounds. The chaos of human behavior – the hesitation, the misunderstandings, the creative detours – doesn't fit into the neat narratives AI generates.

Missing edge cases

AI personas cluster around mainstream behavior. Power users, people with accessibility needs, and unexpected usage patterns are systematically underrepresented. Yet it's often precisely these edge cases that reveal the biggest opportunities and most critical problems.

Biased representation

Training data doesn't represent all groups equally. Certain demographics, dialects, and perspectives appear less frequently. Synthetic personas inherit these gaps and amplify them – wrapped in the authority of data, which makes the bias harder to spot.

When synthetic users can still be useful

Despite these limitations, there are situations where synthetic users can contribute – as long as you know their limits.

For early hypothesis generation, before it's even clear which questions are relevant, they can surface possibilities. They're brainstorming partners, not research subjects.

For testing interview guides, synthetic personas can uncover confusing wording. If an AI misunderstands a question, real users probably will too.

For exploring variations after real research has identified a concrete pattern: what if this user type also had constraint X? Here AI can help think through implications, though the results remain hypotheses, not facts.

When to avoid them

Testing new concepts only with synthetic users is risky. Their positive feedback is meaningless because it follows from the structure of language models, not from real experience.

For specialized target groups – whether medical professionals, industrial engineers, or people with specific disabilities – generic AI personas aren't just useless, they're dangerous. They actively mislead because they simulate expertise they don't have.

Anything involving emotions – trust, fear, frustration, excitement – requires real people. AI simulates emotional responses based on text patterns. It doesn't experience them, and that difference shows up in the data.

And any decision that commits significant resources needs real validation. That's not a matter of preference, but of risk management.

The better path: AI-assisted real research

AI's strength lies not in replacing people, but in making real research more efficient.

AI for execution and analysis: automated interviews with real users, transcription, initial coding, pattern recognition across many conversations. Here AI actually saves time without compromising quality.

Humans for insight: judgment, empathy, recognizing nuances, strategic interpretation. These are the parts that matter most – and that AI cannot deliver.

AI for scaling, but only after real research has validated patterns. Then it can help think through implications and variations.

Humans for validation: final decisions always go back to real users.
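Once AI has handled transcription and initial coding, the pattern-recognition step across many conversations often comes down to simple aggregation. The minimal sketch below assumes each real interview has already been tagged with theme labels (by a researcher or a model); the interview IDs and theme names are hypothetical. It counts how many interviews raise each theme rather than how many times a theme is mentioned, so one talkative participant can't dominate the tally.

```python
from collections import Counter

# Hypothetical coded interviews: interview ID -> theme labels applied to that transcript.
coded_interviews: dict[str, list[str]] = {
    "P01": ["onboarding confusion", "export workaround", "export workaround"],
    "P02": ["pricing concerns", "onboarding confusion"],
    "P03": ["export workaround"],
    "P04": ["onboarding confusion", "pricing concerns"],
}


def theme_prevalence(interviews: dict[str, list[str]]) -> list[tuple[str, int, float]]:
    """Return (theme, interviews mentioning it, share of interviews), most common first."""
    counts: Counter[str] = Counter()
    for themes in interviews.values():
        counts.update(set(themes))  # set(): count each theme at most once per interview
    total = len(interviews)
    # Sort by count descending, then alphabetically, so ties print in a stable order.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(theme, n, n / total) for theme, n in ranked]


for theme, n, share in theme_prevalence(coded_interviews):
    print(f"{theme}: {n}/{len(coded_interviews)} interviews ({share:.0%})")
```

The tally only tells a researcher where to look. Deciding what a recurring theme means, and whether it matters, stays with the humans in the loop.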

This hybrid approach combines AI's speed with evidence from real people. It takes seriously what the research shows: AI is a powerful tool, but only when it builds on the foundation of real conversations with real people.

Frequently asked questions

Can synthetic users replace real user interviews?

No. For exploratory research and final validation, you need real humans. Synthetic users can supplement real research, never substitute for it.

What's the biggest risk of synthetic user research?

The sycophancy problem. AI personas give overly positive, shallow feedback that confirms what you want to hear and misses critical issues that would actually help you build better products.

Are interview-based AI twins more reliable?

Yes, significantly. In the Stanford-Google study, agents built from each person's own interview reached 85% accuracy, measured relative to how consistently people repeat their own answers. But you still need the real interview first, which means you're not actually saving the research step.

When is synthetic user research appropriate?

Hypothesis generation, interview guide testing, and exploring variations on validated patterns. Never for concept validation or final decisions.

How do I explain the limitations to stakeholders?

Frame it clearly: synthetic users generate possibilities, not evidence. Any finding worth acting on needs real user validation. If someone pushes back, ask them whether they'd bet their product roadmap on a coin flip.

Synthetic users are a tool, not a method. Use them to generate questions, then answer those questions with real people.