Surveys reach fewer people every year. And those who do respond click through rather than think. The format that served as the default tool for user feedback for decades is hitting a structural limit – and a controlled study from the University of Mannheim shows that AI-moderated interviews produce markedly richer, more differentiated, and cleaner data than traditional questionnaires.

Anyone looking to improve a product reaches for a survey almost by reflex. Ten questions, a Likert scale block, an open text field at the end. The appeal is obvious: quick to build, cheap to distribute, easy to quantify. Thousands of responses in a week, charts in the quarterly report – everything looks data-driven and valid.

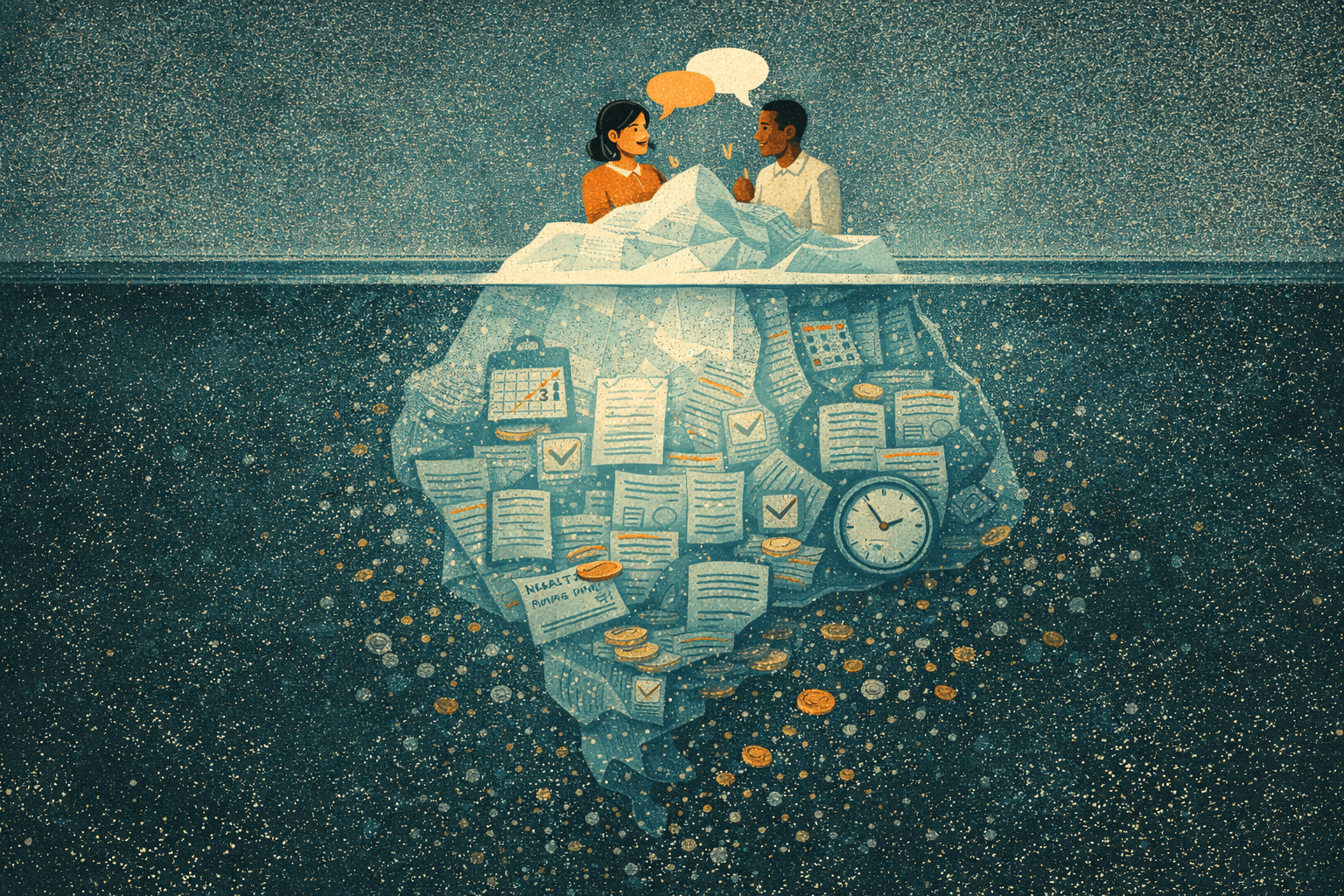

The trouble starts when you try to work with the results. The numbers say two thirds are satisfied. But why the other third is dissatisfied remains unclear: what exactly bothers them, where the moment of frustration lies, which expectation went unmet – none of that shows up in the data, because the format can't capture it.

What surveys never could

Surveys measure distributions. They show how many people choose a given answer. What they don't show is why someone answers the way they do. The distinction sounds technical, but it's fundamental.

A Likert scale from 1 to 5 forces a nuanced opinion into a category. According to a PLOS ONE study, this produces significant information loss – discrete categorisation simplifies what resists simplification. Then there's social desirability bias: people answer the way they think they're expected to, not the way they actually think. PNAS researchers showed that these distortions aren't edge cases but systematically compromise self-reports.

Add to that acquiescence (the tendency to agree with statements regardless of their content) and the tendency to avoid extreme positions, especially on sensitive topics. What ends up in the database isn't the respondent's opinion; it's an artefact of the format.

The open text field at the end of the survey – "Anything else you'd like to add?" – is supposed to close that gap. In practice, hardly anyone types more than a sentence, if that. After 15 minutes of checkboxes, nobody invests another minute in free text.

The numbers behind the decline

Survey response rates have been falling for two decades. What Pew Research documented for phone surveys, a drop from 36 percent in 1997 to six percent in 2018, is now repeating with online surveys. According to Clootrack, email-based surveys still achieve response rates of 15 to 25 percent, and the trend is downward.

Those who do respond are increasingly half-hearted about it: 70 percent of respondents have abandoned a survey before finishing it. The data that ultimately lands in the analysis doesn't reflect opinions; it reflects impatience.

A controlled study from the University of Mannheim puts the difference into numbers. Aylin Idrizovski and Dr. Florian Stahl had 100 participants answer the same set of questions: half via a classic online questionnaire, half in an AI-moderated interview. The interview format produced 39 percent more words per response, 36 percent more identified themes, and considerably higher lexical diversity. Nonsensical responses, so-called gibberish, didn't occur in the interviews at all; in the questionnaires, they accounted for ten percent of answers.
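The study summary doesn't say exactly how these metrics were computed. As a rough illustration only, here is a minimal Python sketch of two of them, assuming a naive regex tokenizer and the plain type-token ratio (distinct words divided by total words) as the lexical-diversity measure. The Mannheim team may well have used different definitions; all function names here are hypothetical.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens via a deliberately simple regex (an assumption)."""
    return re.findall(r"[a-zäöüß']+", text.lower())


def words_per_response(responses: list[str]) -> float:
    """Mean number of word tokens per response (0.0 if there are none)."""
    if not responses:
        return 0.0
    return sum(len(tokenize(r)) for r in responses) / len(responses)


def type_token_ratio(text: str) -> float:
    """Distinct words divided by total words; one simple lexical-diversity measure."""
    tokens = tokenize(text)
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def relative_increase(new: float, baseline: float) -> float:
    """Percentage increase of `new` over `baseline`, e.g. 39.0 for +39 percent."""
    return (new - baseline) / baseline * 100


# Illustrative usage with made-up answers (not study data):
survey = ["Checkout was ok.", "Too slow."]
interview = [
    "The checkout was slow because the payment page reloaded twice.",
    "I gave up when my voucher code was rejected.",
]
gain = relative_increase(words_per_response(interview), words_per_response(survey))
print(f"{gain:.0f} percent more words per response")
```

One caveat on the design: raw type-token ratio falls as texts get longer, so comparing long interview answers with short survey answers this way would understate the interviews' diversity. Length-corrected measures such as MTLD are a common choice for that kind of comparison.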

Participants also rated the AI interviews with higher satisfaction, reported a stronger sense of being heard, and showed greater trust in how their data was handled.

What AI-moderated interviews do differently

A questionnaire is a monologue with checkboxes; an interview is a conversation. The kind of information that emerges is fundamentally different.

In an AI-moderated interview, the participant responds to an open question – and the AI follows up. Not with a pre-defined follow-up, but with one that arises from the answer itself. When someone says "the checkout was frustrating," the AI doesn't ask "how frustrating on a scale of 1 to 5?" but "what exactly frustrated you?" If the response stays superficial, it probes again. Five, six, seven layers deep, until the real reason is on the table.
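The probing behaviour described above boils down to a loop: ask, check whether the answer is still superficial, generate a follow-up from that answer, repeat up to a depth limit. Below is a minimal sketch of that control flow only. `ask`, `generate_follow_up` and `is_superficial` are hypothetical callbacks (in a real system the follow-up would typically come from a language model), and the limit of seven questions is an assumption taken from the "five, six, seven layers" above, not a documented setting.

```python
from typing import Callable

Transcript = list[tuple[str, str]]  # (question, answer) pairs


def run_probe(
    opening_question: str,
    ask: Callable[[str], str],  # hypothetical: poses a question, returns the answer
    generate_follow_up: Callable[[str, Transcript], str],  # hypothetical: next question from latest answer + history
    is_superficial: Callable[[str], bool],  # hypothetical: does this answer need another probe?
    max_depth: int = 7,  # assumed cap on the total number of questions
) -> Transcript:
    """Ask an open question, then keep probing while answers stay shallow."""
    transcript: Transcript = []
    question = opening_question
    for depth in range(max_depth):
        answer = ask(question)
        transcript.append((question, answer))
        # Stop once the answer has substance or the depth limit is reached.
        if not is_superficial(answer) or depth == max_depth - 1:
            break
        # The next question is derived from the answer, not from a fixed script.
        question = generate_follow_up(answer, transcript)
    return transcript


# Scripted demo: canned answers stand in for a participant, and a crude
# word-count rule stands in for a real "is this superficial?" judgement.
answers = iter([
    "The checkout was frustrating.",
    "It was annoying.",
    "The voucher field rejected my code twice, so I gave up.",
])
transcript = run_probe(
    "How did your last purchase go?",
    ask=lambda q: next(answers),
    generate_follow_up=lambda a, t: f'You said: "{a}" What exactly caused that?',
    is_superficial=lambda a: len(a.split()) < 8,
)
for q, a in transcript:
    print(f"Q: {q}\nA: {a}")
```

Passing the three behaviours in as functions keeps the loop itself trivial to test; the hard part in practice is the quality of `generate_follow_up` and `is_superficial`, which this sketch deliberately leaves abstract.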

This works because the AI brings no hypotheses of its own. A human interviewer does: they read tone of voice and facial expressions and can unconsciously steer toward answers that confirm their assumptions. That's not an accusation; it's a documented phenomenon in qualitative research. The AI follows the answer, not its own expectations.

Then there's availability. A human moderator handles three to four interviews a day, needs breaks, has a calendar. An AI conducts hundreds of interviews simultaneously – at night, on weekends, in the participant's language. That doesn't just change the speed; it changes who can participate.

Qual meets quant: the new middle ground

The boundary between qualitative and quantitative research has always been one of effort. Qualitative meant deep insights, small sample. Quantitative meant broad distributions, no depth. You had to choose.

With AI-moderated interviews, you don't. You can have 200 people go through a 30-minute conversation – in 48 hours instead of six weeks. The result is neither a classic qual project nor a survey, but something in between: qualitative depth at quantitative scale.

Here's how it works for us: a researcher defines the interview guide and research questions. The AI conducts the conversations. The analysis pipeline extracts patterns from the transcripts – semantic similarities, theme clusters, sentiment trajectories. What would take weeks manually is available as a structured report within a day or two.
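To make the "theme clusters" step less abstract, here is a toy Python sketch of grouping transcript snippets by similarity. It is not our actual pipeline: it swaps in bag-of-words cosine similarity, a small hand-picked stopword list and a greedy threshold rule (all assumptions) where a production system would more likely use sentence embeddings and a proper clustering algorithm. `STOPWORDS`, `cluster_themes` and the 0.3 threshold are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical, minimal stopword list so filler words don't create fake similarity.
STOPWORDS = {"the", "a", "an", "and", "i", "my", "was", "is", "it", "to", "of", "so", "me"}


def bag_of_words(text: str) -> Counter:
    """Word counts for a snippet, ignoring case and stopwords."""
    return Counter(w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def cluster_themes(snippets: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Greedy single pass: each snippet joins the first cluster whose seed
    (its first snippet) is similar enough, otherwise it starts a new cluster."""
    clusters: list[list[str]] = []
    seeds: list[Counter] = []
    for snippet in snippets:
        vec = bag_of_words(snippet)
        for i, seed in enumerate(seeds):
            if cosine(vec, seed) >= threshold:
                clusters[i].append(snippet)
                break
        else:  # no similar cluster found
            clusters.append([snippet])
            seeds.append(vec)
    return clusters


replies = [
    "The voucher code was rejected at checkout",
    "Checkout rejected my voucher",
    "Shipping took two weeks",
    "Shipping was slow and took weeks",
]
for theme in cluster_themes(replies):
    print(theme)
```

On this toy input the two voucher complaints end up in one cluster and the two shipping complaints in another. The greedy rule depends on input order and on the threshold, which is one reason real pipelines tend to use more robust clustering.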

This shifts the dynamics of research projects. When research no longer costs six weeks and 15,000 euros, the most common excuse disappears: "We don't have the budget right now." Research becomes something you do before every major product decision, not just once a quarter.

When surveys still make sense

Surveys aren't useless – they're a specific tool for specific questions.

Benchmarking works well with surveys. If you send the same NPS questionnaire four times a year and track the trend, you don't need an interview. Standardised metrics like CSAT or CES have their value as seismographs: they show that something has changed, even if they don't show what.

For large demographic studies focused on distributions – how many, how often, which age group – the questionnaire remains the more efficient method. And for screening questions that pre-qualify participants for deeper conversations, short surveys are still the tool of choice.

The question isn't "survey or interview" but "what information do I need?" If you want to know how many of your users are aware of a feature, use a survey. If you want to know why they don't use it, have a conversation.

Try it yourself

It's hard to describe what an AI-moderated interview feels like; it's best experienced firsthand. We're running an open study right now: 20 minutes, no login, directly in your browser.

Frequently asked questions

Can AI-moderated interviews completely replace surveys?

No. For standardised tracking (NPS, CSAT), large demographic studies, and benchmarking, surveys remain the right tool. AI interviews replace surveys where the why matters – anywhere open questions, follow-ups, and contextual understanding are what count.

How valid are the results from AI-moderated interviews?

In a controlled study from the University of Mannheim, in which participants answered identical questions, AI-moderated interviews produced significantly richer data and no nonsensical responses at all, compared with a ten percent gibberish rate in traditional questionnaires. Participants also rated the interview experience with higher satisfaction than the survey format.

What does an AI-moderated interview cost compared to a survey?

A survey with 1,000 participants costs between 2,000 and 10,000 euros depending on panel and complexity, delivering quantitative distributions. A qualitative research project with 10 to 20 human-moderated interviews costs 12,500 to 25,000 euros. AI-moderated interviews offer qualitative depth at a fraction of traditional interview costs while accommodating hundreds of participants simultaneously.

Do participants know they're talking to an AI?

Yes, and that's by design. Transparency about AI use isn't just a legal requirement under the EU AI Act; it also improves openness in practice. People tend to speak more honestly with an AI than with a human interviewer, because social desirability bias is weaker when there is no human counterpart to impress.